The Journey to EU Isolation

technical · Jack Ellis · Nov 30, 2021

Update: On January 13, 2022, the Austrian DPA ruled that Google Analytics is illegal. Luckily, this is exactly why we put EU Isolation in place: to protect our customers against rulings like these.

Back in July 2020, businesses across the world were experiencing anxiety as the Court of Justice of the European Union (CJEU) delivered the Schrems II ruling against Facebook, invalidating the EU-US Privacy Shield. This meant that thousands of companies no longer had a valid, legal transfer mechanism to move EU citizens’ personal data (IP address, full name, address, etc.) to US-owned cloud providers such as Amazon Web Services (AWS) or DigitalOcean.

The ruling stated that companies could still use Standard Contractual Clauses (SCCs); however, they also had to implement supplementary measures to protect the data. A while after the ruling, the European Data Protection Board (EDPB) publicly stated that they are “incapable of envisioning an effective technical measure to prevent that access from infringing on the data subject’s fundamental rights” with regard to US cloud providers.

This ruling didn’t just cause panic for legal teams, who were frantically looking for ways to comply with the ruling and deal with questions from their customers; this also caused enormous problems for technical teams across the globe. Suddenly they had to review their infrastructure and find a legal way to continue transferring personal data to US cloud providers.

Under the GDPR, the IP address (which every internet request sends by default) is considered personal data. This meant that if you were processing any website traffic from EU data subjects (on US-owned cloud providers) without adequate supplementary measures, you were violating the GDPR and could face a hefty fine.

What on earth can we do?

We were not immune to this. We heard about this ruling from our EU-based privacy officer, Rie, along with our lawyer in Canada. We knew we needed to do something about this, and we wanted to respond quickly.

At the time, every request to our customers’ websites was recorded via our US infrastructure, meaning we would temporarily process the visitor’s IP and User-Agent. We only stored anonymized data in our database, but we still processed that personal data on US cloud providers, so things had to change.

We immediately moved to build a feature that would later become EU Isolation. I initially thought we’d deploy EU servers via AWS or DigitalOcean, as they have options available in Germany. The problem there was that US entities still controlled those servers. There’d be no data transfer out of the EU, but under FISA and EO12333, the US government could still get access from AWS or DigitalOcean to our EU-located servers, as they were the ones with the control. They could do this secretly, so this wouldn’t solve the issues brought forward by the Schrems II ruling.

Round one of EU Isolation

We’ve very publicly spoken about how we prefer to use serverless technology. We run on Laravel Vapor, which deploys everything to AWS and is pretty fantastic. But we couldn’t use AWS. We had to use EU infrastructure, which meant we had to leave serverless behind for this project.

I spent a few weeks building out an initial version of EU Isolation. It ran Swoole PHP (this was before Laravel Octane existed), and we got some unbelievable performance for fantastic prices. All was going well, but then we got distracted by someone attacking our company. This took up a considerable amount of time and energy, as we learned how to defend ourselves against these attacks.

Ultimately, once these attacks were under control, we got pulled into other things, and we never fully finished EU Isolation. Part of it was related to the fact that we were waiting on advice from the EDPB (European Data Protection Board) on what they were expecting from us. After all, did they expect everyone to move to EU-owned cloud providers, leaving their US-owned providers behind? Was this the start of a potential tech trade war? Surely not.

New SCCs

Around a year later, when the new SCCs were announced, we were confused. How on earth does adding some clauses to your Data Processing Agreement suddenly protect EU data from US government spying (FISA and EO12333)? It doesn’t. It’s absolute nonsense. Privacy professionals were completely confused over this, and many people incorrectly believe that they’re compliant after adding these new SCCs.

The new SCCs don’t solve the issues, and, as we said above, the EDPB stated that they’re “incapable of envisioning an effective technical measure to prevent that access from infringing on the data subject’s fundamental rights” with regard to US cloud providers.

We spoke with our privacy officer and lawyer again, as we wanted to make sure that we understood all of this, and it became clear that we needed to finish EU Isolation. It was the only way forward. We would complete the dream we had a year ago, and we’d deliver the compliance that our users deserve. Small businesses have a lot to worry about as it is; let’s not have them worrying about whether their website analytics are breaking the law.

The GOAT stack

Our stack had changed from a year ago. We were previously planning to have users opt in to EU Isolation by changing the endpoint they used (e.g. cdn.usefathom.com would become eu.usefathom.com). But we decided we could do better, and we were going to focus on intelligently routing their website visitors automatically.

Our vendor plan was as follows:

Bunny.net

We’d utilize BunnyCDN, an EU-owned, globally available CDN for every single person on the planet. We liked the company, and we also liked the idea of working with a small but mighty business. They weren’t fans of big tech either, and they provided an incredible product. The legal reasoning here was that, as an EU company, Bunny is not subject to US government spying laws.

Hetzner Cloud

We’d bring in Hetzner Cloud, a product provided by Hetzner, a company based in Germany. We’d over-provision the servers, run them across multiple regions and route all EU data subjects here. Plus, we had a contact, Lukas Kämmerling, who worked there full-time, and was willing to do contract work to help get us set up.
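To make the routing idea concrete, here is a minimal sketch of choosing an origin by visitor country. It is an illustration only: the post doesn't say how the routing decision is actually made, so the use of EU member-state country codes, the function name `pick_origin` and both origin hostnames are assumptions.

```python
# Hypothetical sketch: route visitors from EU member states to an EU origin.
# The origin hostnames are placeholders, not real Fathom endpoints.
EU_ORIGIN = "eu-origin.example"
US_ORIGIN = "us-origin.example"

# ISO 3166-1 alpha-2 codes of the 27 EU member states.
EU_COUNTRY_CODES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR",
    "HU", "IE", "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK",
    "SI", "ES", "SE",
})


def pick_origin(country_code: str) -> str:
    """Return the origin that should receive a visitor from this country."""
    if country_code.upper() in EU_COUNTRY_CODES:
        return EU_ORIGIN
    return US_ORIGIN
```

A real deployment would make this decision at the CDN edge, where the visitor's country is already known, rather than in application code.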

AWS Route53

We’d bring in Route53 to handle our DNS, as we needed DNS failover with health checks. We had initial concerns about the DNS service being US-owned, but we learned that the IP the end DNS server would see would a) be truncated to only the first three octets (i.e. 123.123.123.[redacted]) and b) belong to Bunny rather than to the visitor. The only vulnerability identified was DNS modification, which we could solve by implementing 24/7, EU-based DNS monitoring.
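For readers unfamiliar with this kind of truncation, the sketch below shows what keeping only the first three octets of an IPv4 address looks like. It illustrates the concept only; the truncation described above happens in the DNS resolution path, not in our code, and the function name is hypothetical.

```python
import ipaddress


def truncate_ipv4(ip: str) -> str:
    """Zero the last octet of an IPv4 address, keeping only its /24 network."""
    # strict=False lets us pass a host address instead of a network address.
    network = ipaddress.ip_network(f"{ip}/24", strict=False)
    return str(network.network_address)
```

For example, `truncate_ipv4("123.123.123.45")` returns `"123.123.123.0"`, which is shared by up to 256 addresses.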

GitLab (self-hosted)

We’d self-host GitLab on German-owned servers, which would hold our secret encryption key (more on this later) because we couldn’t keep our code/secrets on GitHub (US company). We’re not typically fans of self-hosting, and we self-host nothing else, but luckily we have access to a DevOps genius who is more than happy to self-host this for us.

Sentry (self-hosted)

We’d need to track errors, and our Sentry subscription was US-controlled, so we couldn’t use that. Fortunately, Sentry lets you self-host, so we put that on German-owned servers too.

AWS

We would keep serverless AWS in place for our underlying infrastructure. That meant keeping our US firewall, US servers and US database. And this wouldn’t be a problem because the US servers would never receive EU personal data.

Putting it all together

Now we had the stack planned out; it was time to piece it all together. This would happen in multiple steps.

Step one

The first step was to move our base CDN (cdn.usefathom.com) over to Bunny, with our S3 bucket as the origin. The CDN served assets, so this was a walk in the park. As a first step towards EU Isolation, I also created a rule to remove the X-Forwarded-For value (which holds the visitor’s IP address) before the request hits S3, ensuring no personal data enters S3 (a US-owned service).
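The header removal itself was a rule configured at the CDN, not code. As a rough illustration of what such a rule does to a request, here is a small sketch; the function name is hypothetical.

```python
from typing import Dict, Mapping


def strip_forwarded_ip(headers: Mapping[str, str]) -> Dict[str, str]:
    """Return a copy of the headers without X-Forwarded-For.

    HTTP header names are case-insensitive, so compare in lowercase.
    """
    return {
        name: value
        for name, value in headers.items()
        if name.lower() != "x-forwarded-for"
    }
```

The input mapping is left untouched and every other header passes through unchanged, so the origin still sees a normal request, just without the visitor's IP.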

Step two

The second step was to move our ingest points over. This would be somewhat challenging, as we previously had everything hitting our Vapor set-up, which was deployed behind an Elastic Load Balancer (AWS) and Web Application Firewall (WAF). I also refer to these points as “collector” points, and they’re the endpoints that receive data from pageviews and events on our customers’ websites.

We set up a new Laravel Vapor environment with the same application codebase and created a new firewall in WAF. We weren’t focused on EU Isolation; we were just focused on moving the ingest point. We configured the firewall on AWS to block on X-Forwarded-For, which Bunny passed through for everyone, and we configured the firewall to require a secret header from Bunny, to prevent direct DDoS attacks on the load balancer.
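The secret-header requirement was configured in the firewall rather than written as code, but the check it performs can be sketched as below. The header name `x-cdn-secret` and the function name are made up for illustration, and the constant-time comparison is our choice for the sketch, not something the firewall is documented here to do.

```python
import hmac
from typing import Mapping

SECRET_HEADER = "x-cdn-secret"  # hypothetical header name


def is_from_cdn(headers: Mapping[str, str], expected_secret: str) -> bool:
    """Accept a request only if it carries the shared secret header."""
    supplied = ""
    for name, value in headers.items():
        if name.lower() == SECRET_HEADER:
            supplied = value
            break
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(
        supplied.encode("utf-8"), expected_secret.encode("utf-8")
    )
```

Requests that reach the load balancer directly, without passing through the CDN, won't know the secret and are rejected.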

Once that was all set up and configured, I set up a test rule on Bunny to make sure everything passed through as expected. All traffic was now passing through Bunny, and Bunny was now processing our ingest too. This was huge because it reduced global TTFB (time to first byte). So instead of your users in Germany hitting our US load balancer directly, relying on the speed of their internet connection, they’d now hit our CDN in Germany, which would then reverse proxy to our US servers using its enterprise-grade uplink (much faster than consumer internet connections).

Step three

We now had everything working on Bunny, but EU IP addresses were still being forwarded to our US-controlled infrastructure. This wasn’t compliant with the Schrems II ruling, and we needed to implement our EU Isolation methodology as soon as possible.

To do this, our friend at Hetzner, Lukas, put together three proxy clusters set up to handle close to 100,000 req/s. When receiving a request, the proxy clusters would take the personal data (the IP address) and hash it with a secret key (located only in the EU) before sending it to the US. This would make it impossible for the US-based provider to reverse the hash and identify an individual. In addition to that, we would perform our usual hashing on the US side, converting it to anonymized data.
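A keyed hash of this kind can be sketched in a few lines. This is not the proxy's actual code: the post only says the IP is hashed with a secret key, so HMAC-SHA256 and the function name `pseudonymize_ip` are assumptions made for the example.

```python
import hashlib
import hmac


def pseudonymize_ip(ip: str, secret_key: bytes) -> str:
    """Return a keyed hash of the IP address as a hex string.

    Without secret_key, the output cannot be recomputed from a guessed IP,
    so the key must stay on EU-controlled infrastructure.
    """
    return hmac.new(secret_key, ip.encode("utf-8"), hashlib.sha256).hexdigest()
```

The key matters because the IPv4 address space is small: a plain, unkeyed hash could be reversed by hashing every possible address and comparing the results.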

Our EU isolation infrastructure was deployed to Hetzner, sitting behind managed load balancers, and it distributed traffic between three regions:

- Nuremberg, Germany

- Falkenstein, Germany

- Helsinki, Finland

This was fantastic, and we were ready to roll. My only experience with multi-region availability was AWS Global Accelerator, and that just wasn’t an option here. We tried a handful of things and ultimately landed on Route53.

We set up a DNS entry at Hetzner, which Bunny would route EU traffic to, and then we set up an A record in Route53 that returned multiple values. Those multiple values were managed by three underlying health checks that ran every 30 seconds. If our DNS health checks detected that one of our three clusters was unavailable, it was removed from the DNS records until it was available again.
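Route53 does the health checking and record filtering for us, but the logic is simple enough to sketch. The cluster names, the placeholder IPs (taken from the 192.0.2.0/24 documentation range) and the `is_healthy` callback are all assumptions for illustration.

```python
from typing import Callable, Dict, List

# Placeholder addresses from the documentation range, not real cluster IPs.
CLUSTERS: Dict[str, str] = {
    "nuremberg": "192.0.2.10",
    "falkenstein": "192.0.2.20",
    "helsinki": "192.0.2.30",
}


def healthy_records(
    clusters: Dict[str, str], is_healthy: Callable[[str], bool]
) -> List[str]:
    """Return the IPs to publish: only clusters passing their health check."""
    return [ip for name, ip in clusters.items() if is_healthy(name)]
```

If the Helsinki check fails, `healthy_records(CLUSTERS, lambda name: name != "helsinki")` returns only the two German IPs, and Helsinki reappears once its check passes again.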

But what happens if the DNS was cached by Bunny when one of our clusters fell offline? After all, the lowest TTL we could set was 60 seconds. Well, that’s where this “multi-value” DNS approach comes in handy. The client will try the first IP it receives and, if it doesn’t get a response, it tries the second.
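The client-side half of that behaviour can be sketched as follows. This is a generic illustration of trying addresses in order, not Bunny's actual implementation; the function names, port and timeout are assumptions.

```python
import socket
from typing import Callable, Iterable, Optional


def tcp_connect(ip: str, port: int = 443, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to ip:port succeeds within timeout."""
    try:
        with socket.create_connection((ip, port), timeout=timeout):
            return True
    except OSError:
        return False


def first_reachable(
    ips: Iterable[str], try_connect: Callable[[str], bool] = tcp_connect
) -> Optional[str]:
    """Try each IP in the order received and return the first that responds."""
    for ip in ips:
        if try_connect(ip):
            return ip
    return None
```

Passing `try_connect` as a parameter keeps the retry logic testable without touching the network.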

Bunny caches our DNS entry for a maximum of 60 seconds. And if our health checks, which run every 30 seconds, detect an issue, Bunny will receive only the available IP addresses the next time its cache expires and it hits our DNS servers again. So if one cluster is offline, Bunny will receive two IPs instead of three. Then when the cluster returns, it’ll receive three again. Beautiful.

We deployed everything, and things went very smoothly.

What does this look like?

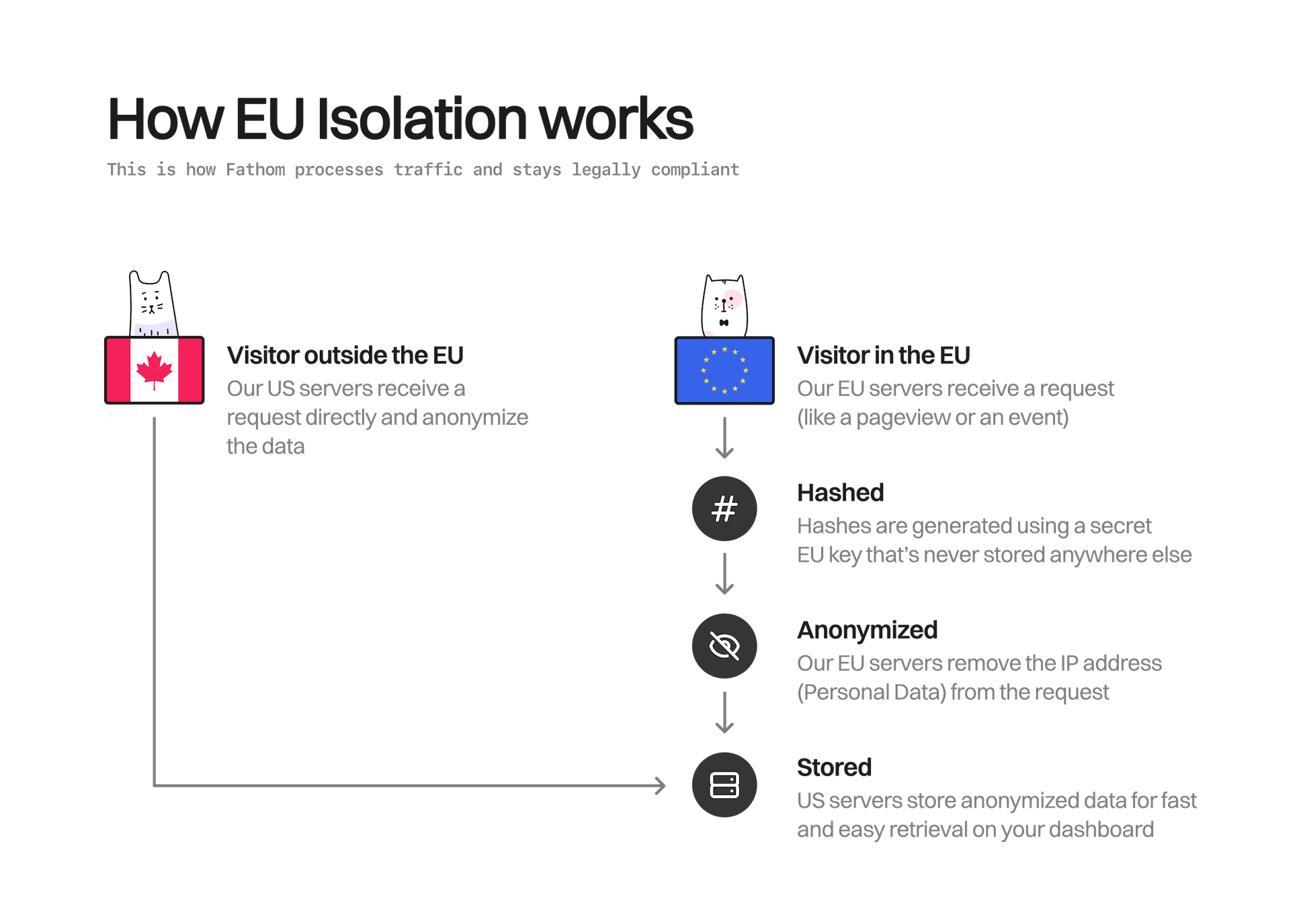

After finishing the infrastructure migration and deploying EU isolation, this is how our system looked.

The wrap-up

We realize that a lot of this sounds like paranoia. The US government tapping a small Canadian company’s infrastructure? Ridiculous, right? And we agree that it does seem unlikely. However, regardless of our opinion on FISA/EO12333, or the US government, we still have to comply with the GDPR, which means we must take Schrems II seriously. This wasn’t an “anti-government” move in any way, shape or form; it was done to comply with ever-changing GDPR requirements—our aim is always to provide truly privacy-first analytics, and that means complying with privacy laws.

Fathom is the only analytics provider that offers EU Isolation while still offering a globally distributed architecture. We’ve seen analytics companies go all-in on hosting their infrastructure in the EU, but that slows things down for website visitors outside of the EU, and nobody wants a slow-loading website.

To our US and Canadian customers, don’t worry; we’ve got your back. And to our EU customers (or potential customers reading this), your legal team is going to love Fathom, so send them to our compliance section and try Fathom Analytics for free.


BIO

Jack Ellis, CTO
